Eevee Crash Course

A new age is upon us. Blender Internal is dead, and we have a shiny new engine to replace it. Moving to a modern, realtime engine isn’t that easy though; there’s a lot to learn. This quick intro will help ease you into the age of Eevee. Since this is only a quick overview, I won’t be getting too in-depth about all the different settings and the algorithms behind them. I’m also skipping materials since the workflow in Eevee is not that different from Cycles: we build materials with node networks and use (almost) the same set of nodes.

I’ve also included links with more information for some of the new techniques and algorithms. Check them out if you want to learn more. If you are into code you can also browse the source code.

Coming from Cycles

What are the differences?

Cycles is an unbiased ray tracer. Ray tracing is a rendering technique based on tracing the path of rays of light in a scene. Rays and materials follow the rules of physics so the way different materials react to light is physically accurate. This method produces highly realistic images but takes a long time to render (as we already know 🙂 ).

On the other hand, Eevee is a rasterizer that uses realtime rendering techniques, much like game engines. Rasterization is a technique where the scene’s geometry is projected onto pixels. The color of each pixel is determined by the shader code of the material and is based on the surface’s normals and the positions of the lights. On top of that, Eevee uses additional techniques to make the result look better and simulate different effects.
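To make "projected onto pixels" a bit more concrete, here is a minimal sketch of the two steps in plain Python. It is not Eevee's code: the pinhole camera model, the function names and the simple diffuse (Lambert) term are assumptions I picked for illustration, and the aspect ratio is ignored to keep it short.

```python
import math

def project_to_pixel(point, focal_length, width, height):
    """Project a camera-space point onto the image (camera looks down -Z)."""
    x, y, z = point
    if z >= 0:
        return None  # the point is behind the camera, nothing to draw
    # Perspective divide: farther points land closer to the image centre.
    ndc_x = focal_length * x / -z
    ndc_y = focal_length * y / -z
    # Map the [-1, 1] range to pixel coordinates (Y grows downwards).
    pixel_x = (ndc_x + 1.0) * 0.5 * width
    pixel_y = (1.0 - (ndc_y + 1.0) * 0.5) * height
    return pixel_x, pixel_y

def lambert(normal, surface_pos, light_pos):
    """Diffuse brightness from the surface normal and the light position."""
    to_light = [light_pos[i] - surface_pos[i] for i in range(3)]
    length = math.sqrt(sum(c * c for c in to_light))
    if length == 0.0:
        return 0.0
    cos_angle = sum(normal[i] * to_light[i] / length for i in range(3))
    return max(0.0, cos_angle)  # surfaces facing away receive no light
```

A point straight ahead of the camera lands in the middle of the image, and a surface facing the light gets full brightness. A real rasterizer does this for every triangle and every covered pixel on the GPU.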

Cycles and Eevee serve different needs. With Cycles you get realism, while Eevee gives you more flexibility and a faster workflow.

What nodes are not available?

Currently Eevee supports all material nodes with the exception of the Velvet and Toon shaders. As a (very approximate) alternative to Velvet you can use the Principled shader. Just bump the sheen and roughness values.

To replace Toon you can now make your own improvised toon shaders with the new Shader to RGB node. Plug the output of any shader into a Shader to RGB node, then into a color ramp and finally into an emission shader with a strength of 1 to get started. You can use this new node to create masks for light/shadow areas, feed color ramps for gradients and more. And of course you can mix this with the new Grease Pencil goodness in 2.80.
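If you are wondering what the color ramp does to the shaded result, this little sketch mimics it outside of Blender. It assumes the ramp uses constant (stepped) interpolation, which is what gives the banded toon look; the stop positions, colors and function names are made up for the example.

```python
def color_ramp_constant(value, stops):
    """Return the color of the last stop whose position is <= value."""
    value = min(max(value, 0.0), 1.0)
    color = stops[0][1]
    for position, stop_color in stops:
        if value >= position:
            color = stop_color
    return color

# Hypothetical three-band ramp: shadow, midtone, highlight.
TOON_STOPS = [
    (0.0, (0.05, 0.05, 0.2)),
    (0.3, (0.2, 0.3, 0.8)),
    (0.7, (0.6, 0.8, 1.0)),
]

def toon_shade(shaded_intensity):
    """Turn a smooth shading value into one of a few flat colors."""
    return color_ramp_constant(shaded_intensity, TOON_STOPS)
```

Any smooth gradient coming out of the shader collapses into three flat bands, and moving the stops changes where the shadow line falls.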

Jumping between engines

Eevee materials work with Cycles and vice-versa. Lights are a different story. Eevee doesn’t use nodes for lights like Cycles does so lighting can look a bit different in each engine. You might also have to tweak engine-specific parts like the different settings for volumetrics, DOF, etc.

Despite that, it’s actually a good workflow idea to start working with Eevee and move into Cycles for the more final renders. You can take advantage of the realtime workflow and then move into ray tracing for the final stretch. Note that you can also plug nodes into engine-specific outputs now. That means you can create multi-engine materials. Check out the “target” dropdown in the output node.

Samples

In the world of Eevee, “samples” means Temporal Antialiasing (TAA) samples. TAA is a new-ish AA technique used in modern game engines. It combines previously rendered frames to smooth out jagged edges. Eevee gets these “frames” by jittering the camera matrix (moving the camera randomly).

Setting this to 1 turns antialiasing off, since it only renders one frame/sample (but gives you a solid performance boost!). Note that this setting also clamps volumetric samples.
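Here is a toy version of the idea for a single pixel, just to show why one sample gives a hard edge and many samples give a smooth one. The scene (one vertical edge crossing the pixel), the uniform random jitter and all names are my own assumptions; Eevee's actual jitter pattern may well be different.

```python
import random

def edge_coverage(sample_x, edge_x):
    """1.0 if the sample lands on the lit side of a vertical edge, else 0.0."""
    return 1.0 if sample_x < edge_x else 0.0

def render_pixel(pixel_x, samples, edge_x=0.3, seed=0):
    """Average several jittered samples taken inside one pixel."""
    rng = random.Random(seed)
    total = 0.0
    for i in range(samples):
        # The first sample sits at the pixel centre, like a render without AA.
        # Later samples are nudged randomly inside the pixel footprint.
        jitter = 0.0 if i == 0 else rng.uniform(-0.5, 0.5)
        total += edge_coverage(pixel_x + 0.5 + jitter, edge_x)
    return total / samples
```

With `samples=1` the pixel is either fully lit or fully dark. As the count goes up, the average settles near the fraction of the pixel that the lit side really covers (about 0.3 here), which is what softens the jaggies.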

The wikipedia article on TAA will help you understand it better, as well as this nice summary of AA techniques. Also check out Sketchfab’s announcement on it.

Irradiance volumes

Irradiance volumes are a kind of light probe used for indirect lighting.

Light probes are a new object type unique to Eevee. They capture the scene’s environment for reflections or indirect lighting. Irradiance Volumes help approximate global illumination by precalculating the intensity of the light. In other words, baking indirect lighting.

To start using them, first add an irradiance volume to your scene and scale it to encompass the area where you want indirect lighting. Now bake it by going to the Indirect Lighting panel in the properties editor. You can also enable auto baking, but watch out for slowdowns if your scene is too heavy. Remember that more space covered by irradiance volumes means more baking time.

Looking further down in that panel you will also find Diffuse Bounces. Coming from Cycles you might be tempted to increase this, but the default (3) is already good enough for most cases. Diffuse Occlusion is the size of the shadow map of each irradiance sample. Irradiance samples form the volume and store incoming light for a specific point in space. We don’t need as much information for indirect lighting so the default of 32px is also good enough.

Shadows

ESM vs VSM

General shadow settings live in the Shadows panel in the render settings and the first option you will notice there is Method. Clicking this dropdown presents two mysterious names:

- ESM (Exponential Shadow Mapping)

- VSM (Variance Shadow Mapping)

Both ESM and VSM are techniques to filter shadow maps to prevent aliasing. ESM uses an exponential mathematical function, while VSM stores extra information about depth, allowing maps to be filtered on the GPU the way textures are (e.g. mipmaps, gaussian blur, etc.).

When to use one or the other depends on your scene and is a subtle decision. VSM doesn’t suffer the same light bleeding artifacts ESM has and in simple scenes it can give you fuller shadows. However it can have some light bleed when the scene is too complex or objects are too close. Another downside of VSM is that it uses more RAM (since it stores more info).
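For the curious, this is roughly what the two shadow tests look like when written out. It is a sketch based on the textbook formulations of ESM and VSM, not a copy of Eevee's shaders. The `exponent` and `bleed_bias` parameters are illustrative names of my own, and the linear remap used to cut light bleed is one common trick that I am assuming here.

```python
import math

def esm_visibility(occluder_depth, receiver_depth, exponent=80.0):
    """Exponential test: visibility falls off as exp(-c * (d - z))."""
    return min(1.0, math.exp(-exponent * (receiver_depth - occluder_depth)))

def vsm_visibility(depths, receiver_depth, min_variance=1e-6, bleed_bias=0.0):
    """Variance test: Chebyshev upper bound from filtered depth moments."""
    mean = sum(depths) / len(depths)                       # first moment
    mean_sq = sum(d * d for d in depths) / len(depths)     # second moment
    if receiver_depth <= mean:
        return 1.0  # receiver is in front of the average occluder
    variance = max(mean_sq - mean * mean, min_variance)
    delta = receiver_depth - mean
    p_max = variance / (variance + delta * delta)
    # Light-bleed reduction: remap [bleed_bias, 1] to [0, 1].
    # bleed_bias must stay below 1.0.
    return min(1.0, max(0.0, (p_max - bleed_bias) / (1.0 - bleed_bias)))
```

The `depths` list stands in for the texels a filter would average. When it mixes a near and a far occluder (say `[0.2, 0.8]`), the variance gets large and a receiver at depth 0.8 still comes out half lit. That is the light bleed mentioned above, and raising `bleed_bias` darkens it.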

You can read more on the juicy math behind ESM in the original paper. And of course, there is one for VSM too.

Controlling quality

Cube size and Cascade size are the size of the shadow maps for point/spot/area lamps and sun lamps respectively. Higher resolution maps will yield better results at the expense of performance. Another option is High Bitdepth, which will create 32-bit depth shadow maps that look better but take more RAM and processing power.

More shadow options

Check out your light’s properties. You will find another shadows panel with even more options. The options shown depend on the light type, but we actually have a great deal of control over Eevee’s shadows. Unlike the previous settings, these are not global; they are specific to each light.

At the bottom you will notice two settings: Exponent and Bleed Bias. These two control the bias for reducing light bleed for ESM and VSM respectively. Use these to control how much light bleeds into the shadow, under or over-darkening the edges.

Reflections

Screen Space Reflections

Screen Space Reflection is a technique for reusing screen space data (AKA the stuff that’s visible) to calculate reflections. It works in a similar way to ray tracing, but intersecting rays with the depth buffer instead of actual geometry.

Screen Space Reflections can give us good looking reflections in realtime, but they have one major downside: they can only reflect what’s visible. They can’t reflect an object or part of it that is obscured, outside the field of view, facing away from the camera, etc.
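A stripped-down version of that ray march helps to see both the trick and its limitation. I am using a single row of the depth buffer and a fixed step size to keep it readable; the function name, the `thickness` tolerance and the numbers are assumptions for the example, not how Eevee does it internally.

```python
def march_ray(depth_buffer, start_x, start_depth, step_x, step_depth,
              max_steps=64, thickness=0.5):
    """Step a ray across one row of the depth buffer until it hits something.

    Returns the column that was hit, or None if the ray found nothing.
    """
    x, depth = float(start_x), float(start_depth)
    for _ in range(max_steps):
        x += step_x
        depth += step_depth
        column = int(round(x))
        if column < 0 or column >= len(depth_buffer):
            return None  # the ray left the screen: no data to reflect
        scene_depth = depth_buffer[column]
        # A hit means the ray is just behind the visible surface.
        if scene_depth <= depth <= scene_depth + thickness:
            return column
    return None

# Far background at depth 10 with a wall at depth 4 on the right side.
row = [10.0] * 6 + [4.0] * 4
```

Marching to the right with `march_ray(row, 0, 1.0, 1.0, 0.5)` finds the wall at column 6. Marching to the left with `march_ray(row, 0, 1.0, -1.0, 0.5)` walks off the screen and returns `None`, which is the case where the engine needs another source for the reflection.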

The good news is that SSR can work together with the other probes. SSR gets priority, but if a ray misses Eevee will fall back to cubemaps and planar reflections for help.

This answer on Stack Exchange goes into more detail on how SSR works.

Cubemaps

A Cubemap is a type of light probe in Eevee. Cubemaps are used to capture the surroundings of objects for reflections. A single cubemap is a collection of six square textures that represent the reflections in an environment; the six squares form the faces of an imaginary cube. A cubemap probe represents an array of several cubemaps.
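Looking something up in those six textures is simpler than it sounds: the largest component of the reflection direction picks the face, and the other two become the texture coordinates. The sketch below follows the OpenGL face convention, which is an assumption on my part; I have not checked how Eevee lays out its faces internally.

```python
def cubemap_lookup(direction):
    """Map a direction vector to (face, u, v) with u and v in [0, 1]."""
    x, y, z = direction
    ax, ay, az = abs(x), abs(y), abs(z)
    if ax == 0 and ay == 0 and az == 0:
        raise ValueError("direction must not be the zero vector")
    if ax >= ay and ax >= az:
        face = "+X" if x > 0 else "-X"
        major, u, v = ax, (-z if x > 0 else z), -y
    elif ay >= az:
        face = "+Y" if y > 0 else "-Y"
        major, u, v = ay, x, (z if y > 0 else -z)
    else:
        face = "+Z" if z > 0 else "-Z"
        major, u, v = az, (x if z > 0 else -x), -y
    # Divide by the major axis to land on the cube face, then remap to [0, 1].
    return face, 0.5 * (u / major + 1.0), 0.5 * (v / major + 1.0)
```

A direction pointing straight along +X hits the centre of the "+X" face, so `cubemap_lookup((1, 0, 0))` gives `("+X", 0.5, 0.5)`.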

Wikipedia has an article about Cubemapping and you can also get some more info from Unity’s documentation.

Planar reflections

Planar reflections or Reflection planes are another kind of reflection probe. They are used for smooth planes like mirrors or windows. They do their magic by re-rendering the scene with a flipped camera and then placing the result in the probe’s area. Note that I said re-render. They will add to render times and decrease performance.
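The "flipped camera" part is just a mirror operation. As a rough sketch (the helper names are mine, and the plane normal is assumed to be unit length), you mirror the camera position and its view direction across the plane and render from there:

```python
def reflect_point(point, plane_point, plane_normal):
    """Mirror a position across a plane (plane_normal must be unit length)."""
    distance = sum((point[i] - plane_point[i]) * plane_normal[i] for i in range(3))
    return tuple(point[i] - 2.0 * distance * plane_normal[i] for i in range(3))

def reflect_direction(direction, plane_normal):
    """Mirror a direction vector across a plane through the origin."""
    along_normal = sum(direction[i] * plane_normal[i] for i in range(3))
    return tuple(direction[i] - 2.0 * along_normal * plane_normal[i] for i in range(3))
```

For a mirror lying on the floor (normal pointing up the Z axis), a camera at `(1.0, 2.0, 3.0)` ends up at `(1.0, 2.0, -3.0)`, looking up instead of down.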

Also note that unless Screen Space Reflections is enabled, these planes will only work on specular surfaces with a roughness close to zero. On the other hand, if SSR is enabled these probes act like team players and help accelerate the ray marching process, adding objects and data missing from view space.

To use the Planar Reflection probes place them slightly above the reflective surfaces and scale them to fill. Don’t get too close though, as it may capture the other side of the surface.

Check out Unreal Engine’s docs for more bits of information about this.

Volumetric

Volumetric settings

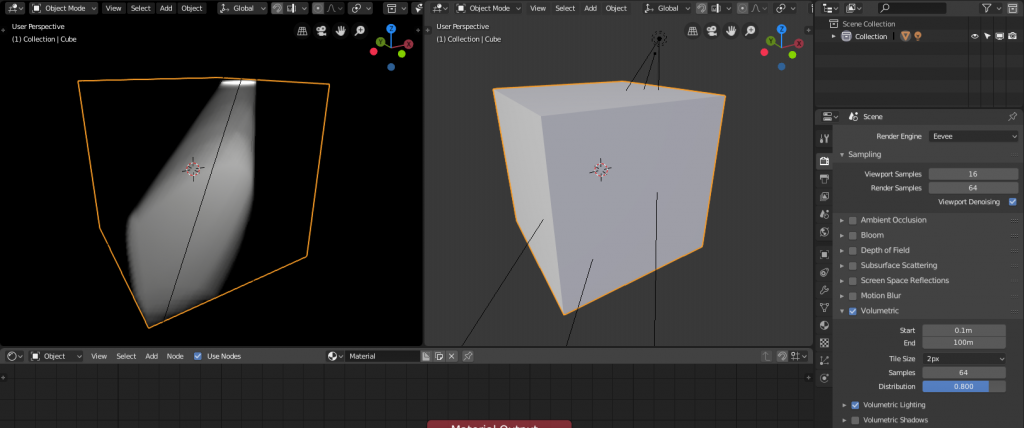

To see any volumetric materials you first need to enable Volumetric in the render settings. And of course you need to have a material (or world) with something plugged in the volume output.

You might notice it looks a bit choppy at first. Lowering the tile size or increasing the samples will improve the quality, but remember that the volumetric samples are clamped by the global samples.

Principled volumetric

The principled shader now has a volumetric cousin. This node simplifies volumetric materials the same way the Disney princess principled shader simplified PBR. It combines Volume Scatter, Volume Absorption and a few others. You can create fog, smoke, wisps and any other material you can think of with just this shader (and textures of course).

Post-processing effects

Depth of Field

Eevee’s DoF is not that different from Cycles: you will find Distance and F‑Stop in the camera properties. As usual you need to enable DoF in the render settings first. Note that the settings for Eevee and Cycles are two separate sets. They work the same way but the engines don’t share them. If you need to squeeze out more performance or get more blur, try playing with max distance in the render settings. Also, in case anyone is caught off-guard by this: this effect is only visible in camera view.

Bloom

Bloom is an effect that reproduces an optics artifact of real world cameras. It adds pools of light pouring from the borders of bright areas to create an illusion of overwhelming brightness.
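Under the hood a bloom pass boils down to three steps: keep only what is brighter than a threshold, blur it, and add it back on top. Here is a one-dimensional toy version. The box blur and the `threshold`, `radius` and `intensity` parameters are simplifications I chose for the sketch, so don't read them as Eevee's exact algorithm.

```python
def bloom_1d(pixels, threshold=1.0, radius=2, intensity=0.5):
    """Apply a simple bloom to one row of brightness values."""
    # 1. Keep only the energy above the threshold.
    bright = [max(0.0, p - threshold) for p in pixels]
    # 2. Spread it around with a box blur.
    blurred = []
    for i in range(len(pixels)):
        lo = max(0, i - radius)
        hi = min(len(pixels), i + radius + 1)
        blurred.append(sum(bright[lo:hi]) / (2 * radius + 1))
    # 3. Add the glow back on top of the original image.
    return [p + intensity * b for p, b in zip(pixels, blurred)]
```

Feed it `[0.1, 0.1, 6.0, 0.1, 0.1]` and the single hot pixel lifts its dark neighbours from 0.1 to about 0.6, while a row with nothing above the threshold comes back unchanged.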

Remember using the Glare node with Fog Glow in the compositor? Well, forget him. Bloom is our friend now. The effect is the same as what we used to do in the compositor but more flexible and realtime. Watch out though, it’s easy to abuse this effect and turn your render into a soup of near-white pixels.

That’s it for today! I will be posting more tutorials as I continue to use Eevee and find new stuff. These next months will be really exciting, as more people start using the engine and come up with new techniques and best practices. Stay tuned.